Introduction to DataOps: Bringing Databases Into DevOps

DataOps is the streamlined combination of data development and data operations. Data development, also known as data engineering, comprises the activities involved with engineering and evolving the data aspects of your technical solutions. Data operations comprises the activities to operate, support, and govern the data aspects of your technical solutions.

This article works through the following topics:

- Why DataOps?

- The DataOps lifecycle

- The 7 Pillars of #TrueDataOps

- Critical DataOps Practices

- The DataOps pipeline

- DataOps and DevOps

- Isn’t DataOps really “data DevOps”?

1. Why DataOps?

There are several reasons why DataOps is critical to your organization:

- Data is mission-critical. Quality data is required in different forms, at different times, and in different ways across your organization to enable data-informed decision making.

- Data is an innate component of your systems. Just as the rest of your information technology (IT) group has adopted continuous ways of working (WoW), so must data professionals. Just as data is part of your systems, DataOps is part of DevOps.

- The increasing rate of change. The pace of change, and the corresponding rise in customer demand, calls for greater responsiveness. An effective DataOps strategy shortens the feedback cycle from the time that you recognize a need for new or improved data to the time that you deliver it.

- Increasing complexity. The increasing complexity of our technical environment requires greater automation and more responsive WoW.

- Increasing need for regulatory compliance. The increased importance of data within society motivates greater regulation of data and its usage. We are seeing countries all around the world enacting privacy, artificial intelligence (AI), and other forms of data regulation. The depth and breadth of these evolving regulations require greater and more integrated automation throughout the entire data lifecycle.

2. The DataOps Lifecycle

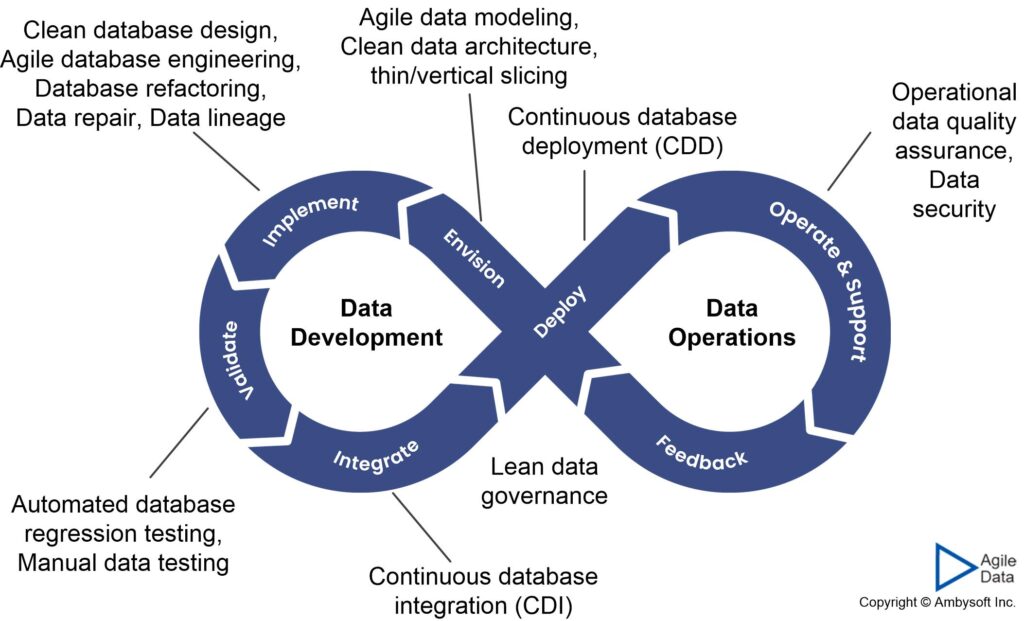

Figure 1 depicts the DataOps process loop. It is shown as an infinite Möbius loop to indicate that DataOps is a continuous initiative that will last for the life of your organizational data. Data development is shown on the left-hand portion of Figure 1, comprising activities to envision, implement, validate, and integrate your data assets. Data operations is shown on the right-hand portion of Figure 1, comprising activities to deploy, operate & support, and provide feedback to development.

Figure 1. The DataOps continuous process loop.

3. The 7 Pillars of #TrueDataOps

The folks at DataOps.live have some great ideas, based on their real-world experience helping organizations adopt DataOps, that they share in The 7 Pillars of #TrueDataOps. The pillars are summarized in Figure 2 and described in Table 1.

Figure 2. The 7 Pillars of #TrueDataOps (from DataOps.live).

Table 1. The 7 Pillars of #TrueDataOps.

| #TrueDataOps Pillar | Agile Data Advice |
| --- | --- |
| ELT and the Spirit of ELT. Prefer extract-load-transform (ELT) over extract-transform-load (ETL). This way, no details are lost in your data warehouse, maximizing the opportunity for accurate data processing in the future. You also want to capture traceability (data lineage) information and change data. The spirit of ELT is to avoid actions that remove data that could be valuable to someone later; in short, future-proof your data. | This is definitely something I need to write more about here on this site, although it is a topic covered in Not Just Data: How to Deliver Continuous Enterprise Data. Additionally, every time you make a copy of data, you degrade performance and incur cost to do so. You also add opportunities for security breaches and quality degradations due to inconsistent values between data copies (an increasing threat as you move towards real-time processing). |
| Agility and CI/CD. Build repeatable and orchestrated pipelines for building/deploying everything data. | Automation of your data processes is key to implementing a DataOps strategy; see the DataOps pipeline section below. This site includes articles on automated database regression testing, continuous database integration (CDI), and continuous database deployment (CDD). |
| Component Design and Maintainability. Small atomic and testable pieces of code and configuration. | I’ve promoted clean architecture and clean design throughout my career, as they enable extensibility. Agile Data has promoted testability and, more importantly, data quality from the very beginning. |
| Environment Management. Branching data environments like we branch code. | Configuration management is key to treating your work, and your data, like assets. |
| Governance, Security, and Change Control. Grant management, anonymization, security, auditability, and approvals. | I first started working on concepts around lean governance at IBM in the late-oughts, and quickly adapted that thinking for lean data governance. The site does need an article or two about the importance of data auditability and data security issues. These topics are on my backlog. |
| Automated Testing and Monitoring. Test-driven data development, automated test cases, and automated, continuous monitoring of production. | Agile Data was certainly one of the first, if not the first, methods to promote the practice of test-driven data development (TDDD). This site doesn’t have much material on operational data monitoring as it was covered in the Enterprise Unified Process (EUP) and then the Disciplined Agile (DA) toolkit. This topic, along with observability, is also on my backlog. |
| Collaboration and Self-Service. Enable data access and cross-team collaboration across the entire organization, for end users and data professionals, while maintaining governance. | Hell yeah! My book Not Just Data: How to Deliver Continuous Enterprise Data describes how to do exactly that. |
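To make the ELT pillar in Table 1 a little more concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table name `raw_orders`, the view name `orders_clean`, and the sample rows are all hypothetical, chosen only for illustration. The idea it tries to show is that raw records are loaded untouched and the transformation is layered on top as a view, so nothing is thrown away that someone might need later.

```python
import sqlite3

def load_raw(conn, rows):
    """Load step: store source records exactly as received (all text, no filtering)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_orders (order_id TEXT, amount TEXT, note TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)

def create_transform(conn):
    """Transform step: a view over the raw table, so the raw rows stay intact."""
    conn.execute(
        """
        CREATE VIEW IF NOT EXISTS orders_clean AS
        SELECT order_id, CAST(amount AS REAL) AS amount
        FROM raw_orders
        WHERE amount GLOB '[0-9]*'  -- keep only rows whose amount starts with a digit
        """
    )

conn = sqlite3.connect(":memory:")
load_raw(conn, [
    ("A1", "19.99", "gift"),
    ("A2", "n/a", "amount missing at source"),  # bad value is kept in the raw table
    ("A3", "5", ""),
])
create_transform(conn)

raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
clean_rows = conn.execute(
    "SELECT order_id, amount FROM orders_clean ORDER BY order_id"
).fetchall()
```

Here `raw_count` still reflects all three source rows, while `clean_rows` contains only the two rows with usable amounts. If the cleansing rule later changes, the view can be redefined without reloading anything.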

4. Critical DataOps Practices

Figure 1 above indicates what I believe to be key practices supporting DataOps. These practices are:

- Agile data architecture. Data architecture is the foundation of a data strategy that supports your organization’s goals and priorities. Agile data architecture does so in a collaborative and evolutionary (iterative and incremental) manner.

- Agile data modeling. Data modeling is the act of exploring data-oriented structures. Agile data modeling is data modeling done in an evolutionary and collaborative manner.

- Agile database engineering. The work required to technically implement data assets, including data stores, data tooling, data transmission, and other components.

- Automated database regression testing. The validation of a data asset, in particular a database, in an automated manner. This is achieved through the creation of a test suite, comprised of automated tests, that is invoked via a testing tool.

- Continuous database deployment (CDD). Continuous deployment (CD) is the automatic deployment of a solution once it has passed any requisite quality criteria. CDD is CD of a data store.

- Continuous database integration (CDI). Continuous integration (CI) is the automatic invocation of the build process for an asset. CDI is CI of a data store.

- Data lineage. Data lineage is the act of fully tracing a data element through the processing steps performed on it from source to destination.

- Data repair. Fixing data quality problems at the actual source, such as an existing production database.

- Data security. Data security is the practice of protecting digital information from unauthorized access, corruption or theft throughout its entire lifecycle.

- Database refactoring. A database refactoring is a simple change to a database schema that improves its design while retaining both its behavioral and informational semantics.

- Lean data governance. The goal of data governance is to ensure the quality, availability, integrity, security, and usability of data within an organization. A lean data governance approach promotes a healthy, collaborative relationship between data professionals and the teams that they’re supporting.

- Manual data testing. The validation of a data asset in a non-automated manner.

- Operational data quality assurance. The ongoing monitoring and verification that operational data and its supporting infrastructure meet or exceed the quality of service (QoS) requirements set for them.

- Test data generation. Tooling and procedures to generate artificial data for testing purposes.

- Thin/vertical slicing. The organization of deliverables into “thin slices” of consumable value that may be deployed into production quickly. These slices are completely implemented – the analysis, design, programming, and testing are complete – and offer real business value.

Yes, there are many more data-oriented practices that you will adopt to make DataOps work in practice, but the ones listed above are the critical ones in my opinion.
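As one illustration of the practices listed above, data lineage can be sketched in a few lines of Python. This is only a toy model under stated assumptions: the `TracedValue` class, the `apply_step` helper, and the source name `crm.invoice.total` are hypothetical, and real lineage tooling typically works at the level of datasets and columns rather than single values.

```python
from dataclasses import dataclass, field

@dataclass
class TracedValue:
    """A data element plus the ordered list of steps applied to it so far."""
    value: object
    lineage: list = field(default_factory=list)

def apply_step(traced, step_name, func):
    """Apply func to the value and return a new TracedValue with the step recorded."""
    return TracedValue(func(traced.value), traced.lineage + [step_name])

# Hypothetical source element and processing steps, from source to destination.
source = TracedValue(" 42.50 USD ", ["source:crm.invoice.total"])
trimmed = apply_step(source, "trim_whitespace", str.strip)
amount = apply_step(trimmed, "strip_currency", lambda s: s.replace(" USD", ""))
loaded = apply_step(amount, "cast_to_float", float)
```

After these steps, `loaded.lineage` lists the source followed by each transformation in order, which is the trace that the data lineage practice asks for.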

5. The DataOps Pipeline

Your DataOps pipeline is the combination of technologies that you use to automate the orchestrated activities of the DataOps lifecycle. Figure 3 shows the types of activities commonly automated. Note that this automation may not be 100%; for example, you may not have automated (yet) all of your database tests. Also note that Figure 3 identifies categories of work that should be automated, such as CDI and CDD, but it does not specify the tooling to do so. A quick Internet search will soon reveal many tools for each category.

Figure 3. Automation throughout the DataOps pipeline.
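The orchestration idea can be sketched without committing to any particular tool. In the following Python sketch, `run_pipeline` and the three stage functions are hypothetical stand-ins for whatever CDI, testing, and CDD tooling you adopt. It assumes the simplest possible policy: stages run in order and the pipeline stops at the first failure, so deployment happens only once the quality criteria have passed.

```python
def run_pipeline(stages):
    """Run (name, action) stages in order; stop at the first failing stage."""
    results = []
    for name, action in stages:
        try:
            ok = bool(action())
        except Exception:
            ok = False  # an unexpected error counts as a failed stage
        results.append((name, "passed" if ok else "failed"))
        if not ok:
            break  # later stages, such as deployment, are skipped
    return results

deployed = []  # records deployments so we can see whether deploy() ran

def integrate():
    return True  # stand-in for building the database from its scripts

def regression_tests():
    return False  # simulate a failing database regression test

def deploy():
    deployed.append("production")
    return True

results = run_pipeline([
    ("integrate", integrate),
    ("test", regression_tests),
    ("deploy", deploy),
])
```

Because the simulated test stage fails, `results` ends at the "test" stage and `deployed` stays empty. A partially automated pipeline would simply have fewer stages in the list, with the remaining work done by hand.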

Notice how Figure 1 and Figure 3 differ. Figure 1 focuses on high-level activities, in particular those that reflect agile data ways of working (WoW). Figure 3 reflects activities that are typically automated, some of which reflect traditional WoW (although hopefully performed in an agile manner) and some of which are clearly “new” agile WoW. Techniques such as database refactoring don’t appear in Figure 3 because very little of this technique is part of your DataOps pipeline. The portions of the work that would be included in your pipeline would be the scripts to deploy a refactoring when it is first implemented and the scripts to remove the old schema (if any) and scaffolding once the transition period has ended. Although these are both very important things, they’re actually very small parts of the overall refactoring work.
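The two kinds of script mentioned above can be sketched for a simple rename-table refactoring, using Python's built-in `sqlite3` module. The table names `cust` and `customer` are hypothetical, and the scaffolding here is a read-only view under the old name, which is an assumption that suits legacy readers only; supporting legacy writers would need more scaffolding than this.

```python
import sqlite3

# Script 1: deploy the refactoring and add scaffolding for the transition period.
DEPLOY_REFACTORING = """
ALTER TABLE cust RENAME TO customer;
-- Scaffolding: legacy readers can keep querying the old name for now.
CREATE VIEW cust AS SELECT * FROM customer;
"""

# Script 2: remove the scaffolding once the transition period has ended.
REMOVE_SCAFFOLDING = """
DROP VIEW cust;
"""

conn = sqlite3.connect(":memory:")
conn.executescript(
    "CREATE TABLE cust (id INTEGER PRIMARY KEY, name TEXT);"
    "INSERT INTO cust (name) VALUES ('Ada');"
)

conn.executescript(DEPLOY_REFACTORING)
# During the transition period both names return the same data.
during_old = conn.execute("SELECT name FROM cust").fetchall()
during_new = conn.execute("SELECT name FROM customer").fetchall()

conn.executescript(REMOVE_SCAFFOLDING)
# From here on, only the new name exists.
```

Everything else about the refactoring, such as agreeing on the transition period and updating the applications that use the old name, happens outside the pipeline.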

6. DataOps and DevOps

A fundamental question is how DataOps and DevOps relate to one another. Here is how:

- DataOps is a critical subset of DevOps. Just like data is a critical aspect of your systems, data ways of working (WoW) are critical aspects of your overall WoW.

- Many of the DataOps development practices are more complex. It isn’t simply a matter of taking the names of common DevOps practices, such as automated regression testing and continuous integration, and sticking the word database into them. Because databases persist data, the engineering practices for them are more complicated than the corresponding non-database practices. For example, automated database regression testing must ensure that tests put the database back into the pre-test state (e.g. reset the data values), otherwise there could be side effects that derail other tests.

- Most of the Ops side of DevOps is data operations. Just saying.
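The point above about putting the database back into its pre-test state can be sketched with Python's built-in `unittest` and `sqlite3` modules. The `account` table and the two tests are hypothetical. Rolling back in `tearDown` is only one possible strategy (others include rebuilding the database or reloading fixture data), and it relies on the default behaviour of `sqlite3` connections, which open a transaction implicitly before an `UPDATE`.

```python
import sqlite3
import unittest

def make_database():
    """Create the shared test database and commit its baseline state."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE account (id INTEGER PRIMARY KEY, balance REAL)")
    conn.execute("INSERT INTO account (id, balance) VALUES (1, 100.0)")
    conn.commit()  # the baseline that every test expects to start from
    return conn

class AccountRegressionTests(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        cls.conn = make_database()

    @classmethod
    def tearDownClass(cls):
        cls.conn.close()

    def tearDown(self):
        # Undo whatever the test changed so the next test sees the baseline.
        self.conn.rollback()

    def balance(self):
        row = self.conn.execute("SELECT balance FROM account WHERE id = 1").fetchone()
        return row[0]

    def test_withdrawal_reduces_balance(self):
        self.conn.execute("UPDATE account SET balance = balance - 30 WHERE id = 1")
        self.assertEqual(self.balance(), 70.0)

    def test_deposit_increases_balance(self):
        self.conn.execute("UPDATE account SET balance = balance + 50 WHERE id = 1")
        self.assertEqual(self.balance(), 150.0)
```

Saved in a file, these tests can be run with `python -m unittest`. Both tests share one database, yet each passes regardless of execution order because the rollback resets the balance to 100.0. Without it, whichever test ran second would start from the wrong balance and fail.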

7. Isn’t DataOps Really Data DevOps?

Yes, DataOps would be more accurately called Data DevOps. DataOps is a much sleeker marketing term, and marketing tends to win out over accuracy in practice. Although I have used the terms “Data DevOps” and “Database DevOps” since around 2018, I’ve decided to abandon them in favour of DataOps.

8. Related Resources