Clean Database Design: Strategies to Increase Data Agility

- What is clean database design?

- Why clean database design?

- Data normalization

- Clean database design is fit-for-purpose

- Clean database designs are technically future proofed

- Clean database design in context

- Related resources

1. What is Clean Database Design?

Clean database design is the application of proven heuristics that lead to high quality. In clean database design data entities (such as tables) are loosely coupled and appropriately cohesive. Loosely coupled means that there is the minimal number of relationships from one data entity to others. Appropriately cohesive means that the right data is captured by a data entity for the desired level of normalization. Normalization refers to the level of redundancy within a data entity (more on this below).

2. Why Clean Database Design?

A cleanly designed data source is easy to understand, test, and evolve. This in turn enables agility.

3. Data Normalization

A critical consideration in clean database design is normalization. There are many ways to describe data normalization, from straightforward to highly mathematical. Naturally I lean towards straightforward strategies. In a nutshell, here’s what you need to aim for:

- Data is stored in one and one only place. A database design is said to be denormalized when data is stored in multiple places and normalized when data is stored in one place only. Figure 1 depicts a “denormalized” way to store the data for an order in a point of sale (POS) system. All of the information required to produce an invoice, or some other form of report, for that order is stored in a single row of a single table. Denormalization makes it very easy and fast to obtain all of the information for a specific purpose, in this case working with an order. Figure 2 depicts a highly normalized way to store the data for an order. Normalization makes it easier to edit data because information is stored in one place only. For example, with the normalized version of order someone’s name and address are stored in one place. In the denormalized version (Figure 1) the name and address are stored with each order, making it difficult to edit that data if someone’s address changes because their information is stored in multiple places.

- Tables and columns are cohesive. What I mean by that is that a table (or data structure in non-relational data stores) should store data for a single entity type. For example, the Order0NF table in Figure 1 stores data pertinent to orders, order items, contacts, and so on. That’s not cohesive. In Figure 2 the Order table stores only information about orders. Similarly, a column (or a data element) should be used for a single purpose.

- Tables are loosely coupled. The primary source of coupling between tables are relationships. A relationship should exist between two tables only if it needs to be traversed (used in a join) to satisfy a business need. Otherwise, be wary of maintaining it. For example, there is a real-world relationship between City and State – a city is in one or more states (some cities cross the border between two states, and sometimes even between countries). But, that relationship isn’t shown in Figure 2 because we haven’t yet run into a requirement for us to traverse that relationship. One day we might, and at that point we’ll evolve the schema and populate the relationship but until then we don’t need the overhead of building and maintaining it.

Figure 1. A denormalized schema for an order – Optimized for reporting (UML Notation). Click to enlarge.

4. Clean Database Design is Fit-for-Purpose

Clean database design requires a contextualized, fit-for-purpose approach. By that I mean that the primary purpose of your database should drive its overall design strategy. There are two categories of database to consider:

- Online analytical processing (OLAP). Reporting databases, such as data warehouses, typically reqire a denormalized design to be performant. Figure 1 depicts a table from such a database, in this case for orders.

- Online transaction processing (OLTP). Transactional databases typically require a highly normalized design to be accurate. This means that the data for a single entity, in this case an order, is stored in multiple tables and then recombined as needed. In Figure 2 entity types such as order, item, and contact are stored in individual tables. This makes it easier to update the information pertinent to each entity type.

5. Clean Database Designs are Technically Future Proofed

“Future proofing” means that you architect or design something to stand the test of time. In the case of a database, you want to design it to meet your future requirements. Unfortunately, you don’t know how the business requirements will evolve in the future, and trying to predict them tends to result in big design up front (BDUF) which results in solutions that are difficult (slow and expensive) to evolve. So don’t do that. Instead, focus on addressing common change cases and common technical challenges that aren’t specific to your business domain. These common change cases are:

- History. We need to know the specific data values at a specific point in time

- Inconsistency. We must work with data sources that are built by different people within their unique context.

- Complexity. The world is becoming more complicated.

- Timeliness. The speed of business is accelerating.

- Consumability. We need to support a larger range of data access technologies and styles.

There are several strategies for future proofing your database design to ensure you address the common change cases that you (will) face:

- Implement truly unique surrogate keys. A surrogate key is a key that does not have business meaning. A “truly unique” surrogate key is one that has a unique value across all entities. For example, if a Customer record has a key value of 1701 then no other record has that value, including non-employee records. In Figure 3 you see that I have introduced Item_ID, which is a hash key (a common approach to unique surrogate keys), as the primary key for Item. I kept the ItemNumber, which is a unique business/natural key, as that is how people identify items. For more about key strategies, read Choosing a Primary Key: Natural or Surrogate?.

- Maintain historical data values. You want to maintain historical data values so that you can determine the state of an entity at any given point in time. This may be important for regulatory compliance concerns, to identify trends over time, or to provide superior customer service. The easiest way to do this is to add a history table corresponding to the “current” table, in this case Item_History. In Figure 3 you see how I’ve done this. Item_History has all of the data fields of Item, plus Begin_DT and End_DT to indicate the time range during which the data values were applicable. A row is added to this table every time there is an update to the Item table, copying the existing (pre-update) values into history table. Item.LastUpdate_DT is used as the Item_History.Begin_DT value and the current datetime is used for Item_History.End_DT and the new value for Item_LastUpdate_DT.

- Implement soft deletes. A soft delete is the act of marking a record as deleted, but leaving it in the table. A hard delete is an actual deletion of that data. The easiest way to do this is with a delete marker, such as a boolean column named Is_Deleted or Is_Active. Soft deletes enable you to support full history of an entity as well as to be regulatory compliant. However, many countries now have a “right to be forgotten” which means that certain types of data are required to be completely removed (hard deleted).

- Set and follow common conventions. My experience is that data naming conventions can be one of the most important drivers of understandability of your schema. For example, in Figure 3 you see that I’m following a consistent set of naming conventions. First, I’m using full English words for the names of things. Second, “technical aspects” that support future proofing strategies involve an underscore, such as Item_History or End_DT. Third, multi-word names use CamelCase format, for example ItemName. Your organization will want to develop its own set of conventions, or adopt conventions from an external source if appropriate. My advice is to agree to a reasonable set of conventions and then apply them consistently.

Figure 3. A future-proofed data schema (UML Notation). Click to enlarge.

6. Clean Database Design in Context

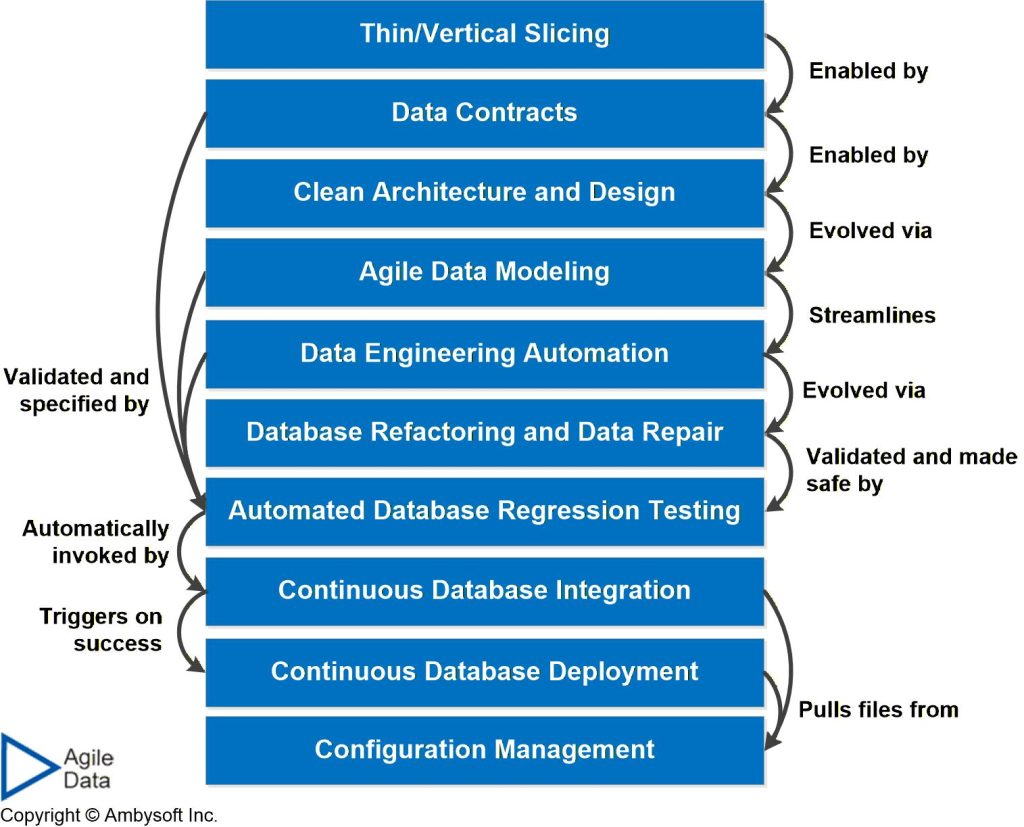

As you see in Figure 4 clean architecture and database design enables you to thinly slice what you deliver to your stakeholders into small increments. Clean database design is evolved via agile data modeling. Your database design is kept clean, or is cleaned up, via database refactoring.

Figure 4. The agile database techniques stack (click to enlarge).

The following table summarizes the trade-offs associated with clean database design and provides advice for when to adopt it.

Table 1. Clean database design in context.

| Advantages |

|

| Disadvantages |

|

| When to Adopt This Practice | My knee-jerk reaction is to say always, but that wouldn’t be accurate. Sometimes time is of the essence and it makes sense to accept technical debt now and decide to pay it down in the future. Hopefully that is rare decision that when it is made is a prudent and deliberate one. |